At this point I just want to have quality games. The graphics are beyond good enough. I don’t need to custom render a photorealistic action movie from my PC.

As an old fart, I actively dislike photorealistic graphics in most cases. I’m playing a game, and I kind of want it to look like a game, which generally means more surrealistic - exaggerated contrast, high saturation, low texture - than realistic. I’d rather play where the characters look like caricatures than my next door neighbor. And that doesn’t even go into great games with sprite-like graphics.

Enough is enough. You’ve saturated the art budget, it’s time to pay writers more.

> You’ve saturated the art budget, it’s time to pay writers more.

I wish writing got more focus in general. There is a lot of theory to good writing that is often just completely ignored while the latest theoretical papers are taken into account for photorealistic rendering and such things that are much less important.

Yup, I honestly avoid the hyper-realistic games anyway. The closest I have gotten recently is the Yakuza series, and even that is very clearly a game, even in their high-quality renders. Gameplay is far more important than graphics quality. I don’t even care at all about RTX, just give me a fun game with an interesting story, and give the art team a lot of leeway on how to represent that.

I almost never buy games day 1 because they’re full of bugs (though they do look pretty), but do you know what game I’m excited to buy day 1? Zelda: Echoes of Wisdom. It’s basically the opposite of the big-budget, hyper-realistic games, and I’m all for it. I expect great gameplay and minimal bugs, and I’m willing to pay a premium for that.

I wish the big studios went back to putting fun first, instead of trying to compete on who can run my PC temps the highest.

Exactly!

I find myself coming back to Cataclysm: Dark Days Ahead, Caves of Qud, Dwarf Fortress and other “low graphical” games because of the content.

There is a limit on how long beautiful graphics can keep me playing a boring game.

The day I understood that, I started to pick up games based on content, and gosh I am richer than ever!

Nonetheless, I have plenty of games to play when I feel like it.

I’ll be honest, I have trouble with low graphic games. But mediumish is fine. I still enjoy plonking monsters on Diablo 2. But I also enjoy Satisfactory and Snow Runner. I can’t conceive of a good reason graphics need to go further. Hell, Baldur’s Gate 3 was beautiful and these guys want us to buy more graphics capacity? Why? It’s ridiculous.

Honestly if the gameplay and/or story are good enough, I don’t care one bit about hyper-realistic graphics

Hell, the more stylised the graphics of a game, generally the more interested I am.

Games like Obra Dinn, Lisa or Undertale that lean into the graphical limitations of the past are some of my all time favourites

It’s an underappreciated fact that art direction trumps graphical fidelity, and it’s not close. Grim Fandango for example is old as dirt but still holds up remarkably well thanks to its unique look and strong art direction, despite being challenged polygonally and resolution-wise etc. There are many other examples.

Exactly. And you can go pretty far with this as well. I really enjoyed Manifold Garden, which has pretty simple graphics, but definitely had a clear art direction and a very interesting 3D world to explore. There are probably more polygons on the face of a MC in a AAA game than in that entire game, yet I felt absolutely immersed while playing it.

Give me more unique experiences, not more GPU clock cycles…

I’ve been repeatedly disappointed with most modern games, so I’ve taken to emulating my old games and playing them on my laptop. It’s honestly a pretty good time, would recommend.

I’m getting to play games I loved that I haven’t touched in over a decade (MotorStorm, all the Ratchet and Clank games, the good Need for Speeds, etc). Plus if there were any games I wanted as a kid but didn’t have the money, I can buy most of them off eBay for cheap.

The graphics of OpenTTD are beyond what is necessary for a good game.

It entirely depends on the genre. I probably would want a bit more detail on the faces than that if it was an emotional story line, say the kind of quality that To The Moon had.

Man who is heavily funded by AI research and use continues pushing that AI is necessary.

We certainly can. NVIDIA’s CEO realizes that the next buzzword that sells their cards (8K, 240hz, RTX++) isn’t going to run at good framerates without it.

That’s not to say AI doesn’t have its place in graphics, but it’s definitely a crutch for extremely high-end rendering performance (see RT) and a nice performance and quality gain for weaker (hopefully cheaper) graphics cards which support it.

As a gamer and developer I sort of fear AI taking the charm away from rendered games as DLSS/FSR embeds itself in games. I don’t want to see a race to the bottom in terms of internal, pre-DLSS resolution.

With you there. The workload on developers is reduced with these features, to a degree. But, instead of saved effort then getting directed to working on gameplay mechanics and such, to me it feels like many devs just see it as time/money saved, producing a game that looks and plays like one from 10 years ago, but runs like it’s cutting edge.

For instance, Abiotic Factor. That game on my RX 6800 XT runs at 40-50fps when at 100% resolution scaling at 1440p. Why? It’s got the fidelity of Half Life 1, why does it need temporal upscaling to run better? (I adore that game btw, Abiotic Factor is so much fun and worth getting even if playing alone!)

Not saying that’s how every dev is, I know there are plenty of games coming out nowadays that look and run great with creators that care. Just feels like there are too many games that rely on these machine learning based features too heavily, resulting in blurriness, smearing, shimmering, on top of poorer performance.

Just hoping that something like an RTX 4090 does not become the default cost of entry for playing PC games because of this. It would be unfortunate for the ability of game developers to create and tune by hand to become a lost art.

As a (non-game) developer, AI isn’t even that great at reducing my burden.

The organization is enthusiastic about AI, so we set up the Gitlab Copilot plugin for our development tools.

Even as “spicy autocomplete” only about one time in 4 or so it makes a useful suggestion.

There’s so much hallucination, trying to guess the next thing I want and usually deciding on something that came out of its shiny metal ass. It actually undermines the tool’s non-AI features, which pre-index the code to reliably complete fields and function names that actually exist.

I was going to defend “well ray tracing is definitely a time saver for game developers because they don’t have to manually fake lighting anymore.” Then I remembered ray tracing really isn’t AI at all… So yeah, maybe for artists that don’t need to use as detailed of textures because the AI models can “figure out” what it presumably should look like with more detail.

I’ve been using FSR as a user on Hunt Showdown and I’ve been very impressed with that as a 2k -> 4k upscale… It really helps me get the most out of my monitors and it’s approximately as convincing as the native 4k render (at lower resolutions it’s not nearly as convincing … but that’s kind of how these things go). I see the AI upscalers as a good way to fill in “fine detail” in a convincing enough way and do a bit better than traditional anti-aliasing.

I really don’t see this as being a developer time saver though, unless you just permit yourself to write less performant code … and then you’re just going to get complaints in the gaming space. Writing the “electron” of gaming just doesn’t fly like it does with desktop apps.

I know this is a bit late, but Copilot is only OK if used for code completion. I switched to the free tier of Supermaven a month ago and it’s been way more helpful, as it can handle context better. Probably cuts coding in half and takes away a third of debugging.

Asking ChatGPT for code has also become better, but IMO it’s still not reliable enough to use regularly. Just had some Docker code written and it got it wrong 3 times, so I gave up on that.

I get your point, AI can only save time if you know exactly what you’re doing and it will only be helpful sometimes. But when it is, it’s such a time saver.

Mostly it really is just a fancier auto-complete. It is most useful for situations where you want to essentially do the equivalent of copy&paste and then make changes in a few predictable places in each copy.

It is total crap at writing code itself to the point where you need to read the code and understand it to know it hasn’t screwed up, something that takes much, much longer than just writing it yourself.

Yeah AI as a dev is shit, but AI as a more thoughtful auto-complete is actually pretty great.

To me it looks like AIs currently are right at the boundary between being a tool and being a companion. But to be a full companion, they can’t be up against the boundary, they need to be well established and tried and tested as a companion to be used repeatedly, so we’re still a few decades out from that from what I can tell.

This was my fear when they announced they got an AI to generate Doom in real time with no code (well, code for the AI, but they got the game via a prompt). Yeah, it’s amazing, but they were running old-school Doom at 20fps on top-end hardware, with additional AI hallucinations.

Next thing you know we’ll be simulating an entire universe just to play bee simulator.

If you are inferring 32 pixels from 1 pixel, that is because the model has been trained on billions of computed pixels. You cannot infer data in a vacuum. The statement is bullshit.

Yeah but it does it much faster and more efficiently (according to him).

“im a fucking idiot and i want to put “Ai” on products to appeal to “new markets” because im greedy”

So I can pay $1 for a game and you can infer the other $32?

Man really wants that AI hype money.

Don’t believe anything this goon says. Don’t believe the claims of those who stand to profit from those same claims.

oh boy, back to 160p but with ai upscaling

“we can’t draw pixels anymore without making graphics cards stupidly expensive because of Reasons ™”

Fify

Where “Reasons” = “Profit,” probably.

Perhaps, we should be more concerned with maintaining and keeping relevant current hardware, over constant production of more powerful hardware just for the sake of doing it. We’ve hit a point of diminishing returns in terms of the value we’re actually being given by each new generation. Even the PS4 and Xbox One were able to produce gorgeous graphics.

> Even the PS4 and Xbox One were able to produce gorgeous graphics.

But not always (or even usually IMO) at decent frame rates. I get major motion sickness and nausea if a game doesn’t run near 60fps constantly, it’s really unfortunate.

Which is really less because of the consoles themselves and more because of the priorities of AAA game studios

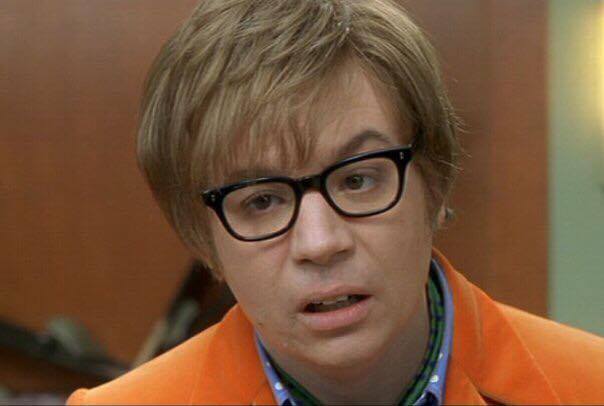

On the flipside, you give real intelligence 32 pixels and it infers photorealistic images:

(The textures are 32x32 pixels. Yes, that’s technically 1024 pixels, but shhh. 🙃)

I would go so far as to say if you get rid of the graphics completely and have text descriptions (think Dwarf Fortress which has many things that are not represented in its graphics at all, just in the textual descriptions) you fully free the imagination of the player.

Some things are just not representable graphically at all, my go to example is “the most beautiful woman he had ever seen”, easy to write in text, impossible to portray on screen in a way that every viewer will feel the same.

Idk, I really suck at imagining things like in DF, but I really enjoy the gameplay. I don’t need a good representation of what I’m interacting with, but I do need something.

Yeah, same. The game where that screenshot is from (DCSS) also has an ASCII mode, where that skeleton dragon would probably look like this:

D

The text log would say that a skeleton dragon appeared, and I could even imagine a skeleton dragon by itself quite easily, but when it comes to a whole room full of monsters, then it’s just a lot of info to keep track of. The small textures are almost like icons, in that they’re a compact way of telling me where which monster is.

Sounds just like Dwarf Fortress, which had been ASCII for decades before the Steam version added graphics (you could get icon packs before that point though). And honestly, I love DF and played quite a bit in the ASCII-only mode (I used to SSH into my server and run in actual ASCII mode), because the gameplay was worth it. Now that DF has a proper GUI, I’ll just use that, and I don’t mind that it looks like a game from the 90s.

“You are in a blue room. There’s a fountain. There are doors north and east. What do you do?”

DCSS <3

I mean we could do things like Arkham Knight, Flight Simulator, The Last of Us 2 and so on. Do we really need to do everything realtime or could we continue baking GI?

AI models are already kind of baked. Just not into data files, but into a bigass mathematical model.

I guess that is why AI runs like ass?

Maybe I don’t know enough about computer graphics, but in what world would you have/want to display a group of 33 pixels (one computed, 32 inferred)?!

Are we inferring 5 to the left and right and the row above and below in weird 3 x 11 strips?

I would assume that they are saying in a bigger scope and just happen to divide down to a ratio of 1 to 32.

Like rendering in 480p (307k pixels) and then generating 4K (8.3M pixels), which results in a ratio of about 1:27, sorta close enough to what he’s saying. AI upscalers like DLSS and FSR are doing just that, at less extreme upscale ratios.
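For anyone who wants to check that arithmetic, here is a rough sketch. It assumes 480p means 640x480 and 4K means 3840x2160 (the figures that match the 307k and 8.3M above); the other resolutions and the function names are just illustrative.

```python
# Pixel dimensions (width, height). 480p is assumed to be 640x480 here;
# a widescreen 480p would give a slightly different ratio.
RESOLUTIONS = {
    "480p": (640, 480),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total number of pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_ratio(render, output):
    """Output pixels produced per rendered pixel when upscaling."""
    return pixel_count(output) / pixel_count(render)


if __name__ == "__main__":
    for render in ("480p", "1080p", "1440p"):
        ratio = upscale_ratio(render, "4K")
        print(f"{render} -> 4K: 1 rendered pixel per {ratio:.2f} output pixels")
```

Under those assumptions 480p to 4K comes out at 27 output pixels per rendered pixel, while the more typical 1080p to 4K and 1440p to 4K upscales are 4 and 2.25 respectively.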

Why not just make the first pixel bigger?

Just make the screen one gargantuan pixel, and infer the other 2 million.

Don’t be silly, that wouldn’t work since the screen and the pixel have different aspect ratios.