cross-posted from: https://lemmy.world/post/1306474

This repository contains the research preview of LongLLaMA, a large language model capable of handling long contexts of 256k tokens or even more.

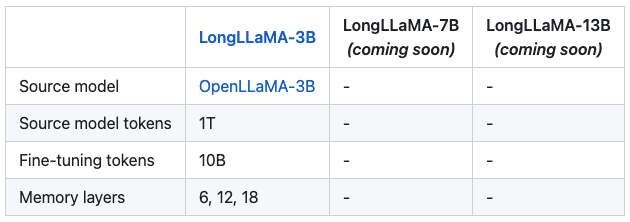

LongLLaMA is built upon the foundation of OpenLLaMA and fine-tuned using the Focused Transformer (FoT) method. We release a smaller 3B variant of the LongLLaMA model under a permissive license (Apache 2.0), along with inference code supporting longer contexts on Hugging Face. Our model weights can serve as a drop-in replacement for LLaMA in existing implementations (for short contexts of up to 2048 tokens). Additionally, we provide evaluation results and comparisons against the original OpenLLaMA models. Stay tuned for further updates.

This is an awesome resource to pair alongside the recent FoT breakthroughs covered in this paper/post here.

Focused Transformer: Contrastive Training for Context Scaling (FoT) presents a simple method for endowing language models with the ability to handle context consisting possibly of millions of tokens while training on significantly shorter input. FoT permits a subset of attention layers to access a memory cache of (key, value) pairs to extend the context length. The distinctive aspect of FoT is its training procedure, drawing from contrastive learning. Specifically, we deliberately expose the memory attention layers to both relevant and irrelevant keys (like negative samples from unrelated documents). This strategy incentivizes the model to differentiate keys connected with semantically diverse values, thereby enhancing their structure. This, in turn, makes it possible to extrapolate the effective context length much beyond what is seen in training.
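To make the memory idea a bit more concrete, here is a minimal sketch of a single query attending over its local (key, value) pairs plus a memory cache that mixes one relevant entry with unrelated "distractor" entries. This is not the authors' implementation: the function names, the toy vectors, and the use of plain scaled dot-product attention are all assumptions made for illustration only.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    # Subtract the max for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def memory_attention(query, local_kv, memory_kv):
    """Attend one query over local (key, value) pairs plus cached memory pairs.

    Hypothetical helper: scaled dot-product attention over the concatenation
    of local context and memory cache.
    """
    pairs = list(local_kv) + list(memory_kv)
    scale = 1.0 / math.sqrt(len(query))
    weights = softmax([dot(query, key) * scale for key, _ in pairs])
    dim = len(pairs[0][1])
    output = [sum(w * value[i] for w, (_, value) in zip(weights, pairs))
              for i in range(dim)]
    return output, weights

# Toy data (made up): one local pair, one relevant memory pair, and two
# distractors standing in for keys from unrelated documents.
query = [4.0, 0.0]
local_kv = [([0.0, 1.0], [0.0, 0.0])]
relevant = [([4.0, 0.0], [1.0, 0.0])]
distractors = [([0.0, 4.0], [0.0, 1.0]), ([-4.0, 0.0], [0.0, -1.0])]

output, weights = memory_attention(query, local_kv, relevant + distractors)
print("weight on relevant memory key:", round(weights[1], 4))
```

When the key space is well structured, as in this hand-built toy, almost all of the attention weight lands on the relevant memory entry even with distractors present. As the abstract describes it, the contrastive training is what pushes a real model toward that kind of separation.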

LongLLaMA is an OpenLLaMA model fine-tuned with the FoT method, with three layers used for context extension. Crucially, LongLLaMA is able to extrapolate well beyond the context length seen in training. For example, in the passkey retrieval task, it can handle inputs of length 256k.
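For anyone unfamiliar with passkey retrieval: the idea is to bury a short secret somewhere in a very long stretch of filler text and then ask the model to repeat it. Below is a rough sketch of how such a prompt could be built. The filler sentence, the prompt wording, and the `build_passkey_prompt` helper are hypothetical and not taken from the LongLLaMA evaluation code.

```python
import random

def build_passkey_prompt(n_filler, passkey, seed=0):
    """Hide a passkey line at a random position inside repeated filler text.

    Hypothetical helper; the exact wording used in the real benchmark may differ.
    """
    rng = random.Random(seed)
    filler = "The grass is green. The sky is blue. The sun is yellow."
    lines = [filler] * n_filler
    # Pick an insertion index anywhere from the start to the end of the filler.
    position = rng.randint(0, n_filler)
    lines.insert(position, f"The pass key is {passkey}. Remember it.")
    lines.append("What is the pass key?")
    return "\n".join(lines), position

prompt, position = build_passkey_prompt(n_filler=1000, passkey=48213)
print(f"passkey hidden at line {position} of {prompt.count(chr(10)) + 1}")
```

Raising `n_filler` stretches the prompt to whatever length you want to probe, so the same generator can be reused to check at what input length a model stops recovering the passkey.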

This is an incredible advancement in context lengths for LLMs. Less than a month ago we were excited to celebrate 6k context lengths. We are now blowing these metrics out of the water. It is only a matter of time before compute and efficiency gains follow and support these new possibilities.

If you found any of this interesting, please consider subscribing to /c/FOSAI where I do my best to keep you up to date with the most important updates and developments in the space.

Want to get started with FOSAI, but don’t know how? Try starting with my Welcome Message and/or The FOSAI Nexus & Lemmy Crash Course to Free Open-Source AI.