I have a hard time seeing what we are currently calling “AI” evolve to address issues like this. It’s not real intelligence, it’s just text prediction. It seems fundamentally flawed for use-cases where you need 100% certainty of the answers being appropriate.

This isn’t the AI people think it is. And the only danger it poses is irresponsible use.

Part of why the NLP community is so excited about it is that text prediction as an optimization problem eventually necessitates some form of intelligence in order to reduce the loss. The architectures we’re using also improve predictably with size (the loss keeps falling smoothly as parameter count grows, closer to a power law than a straight line) and show no real signs of hitting a ceiling yet, which suggests you can keep making them smarter just by making them bigger.

I would encourage you to consider what you mean by “real” intelligence and “just text prediction”, because AI throws a lot of our assumptions out the window. Talk to GPT-4 in a chatbot cognitive architecture for a few hours and you get a sense of just how intelligent it can be (with the right prompting), but the architecture itself is literally incapable of “thinking” (some wiggle room for inter-layer states) - that is, internal, stateful, causal processes which drive external behavior. A chatbot CA can vaguely approximate it via chain of thought prompting, but without that it essentially has to guess what its thoughts “would” be if it had them, which is very weird and hard to understand intuitively.

In case it isn’t clear, what I mean by “cognitive architecture” is the machinery surrounding the language model which lets it interact with the world. A language model in isolation is a causal autoregressive inference engine that will happily autocomplete anything. Language models are not chatbots, only components in chatbots. That’s just the modality we’re most familiar with because ChatGPT broke ground, but it’s not their only or even most useful form. The LLM is comparable to a human Broca’s area, which will generate an endless stream of language if you let it. It’s the neural circuitry around it which gives rise to coherent thoughts and subjective experience.
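To make the model-versus-chatbot distinction concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: a toy bigram counter stands in for the language model, and `train_bigram`, `autocomplete`, and `chat_turn` are made-up names, not any real library’s API. A real LLM is vastly more capable, but the shape of the loop is the same: the model only ever continues text, and the “chatbot” is the wrapper that formats a transcript and cuts the continuation off at a stop token.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Toy stand-in for a language model: count which token follows which."""
    counts = defaultdict(Counter)
    tokens = corpus.split()
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def autocomplete(model, prompt, max_new_tokens=5):
    """Autoregressive loop: predict one token, append it, feed it back in."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = model.get(tokens[-1])
        if not followers:
            break  # the toy model has never seen this token followed by anything
        # Greedy decoding: always pick the most frequent follower.
        tokens.append(followers.most_common(1)[0][0])
    return " ".join(tokens)


def chat_turn(model, history, user_message, max_new_tokens=20):
    """The 'chatbot' wrapper: build a transcript, let the model continue it,
    and keep only the text up to the stop token "User:"."""
    prompt_tokens = history + ["User:", *user_message.split(), "Bot:"]
    completion = autocomplete(model, " ".join(prompt_tokens), max_new_tokens).split()
    reply = []
    for token in completion[len(prompt_tokens):]:
        if token == "User:":  # stop sequence: the model started writing our turn
            break
        reply.append(token)
    history[:] = prompt_tokens + reply  # remember the transcript for the next turn
    return " ".join(reply)


# Hypothetical training transcript, invented for this example.
corpus = (
    "User: hello Bot: hi there "
    "User: thanks Bot: you are welcome "
    "User: hello Bot: hi there"
)
model = train_bigram(corpus)

# The bare model keeps going and writes the user's next line too.
raw = autocomplete(model, "User: hello Bot:", max_new_tokens=5)
print(raw)

# The wrapper turns the same model into something that looks like a chatbot.
history = []
reply = chat_turn(model, history, "hello")
print(reply)
```

Left alone, `autocomplete` runs straight past the end of the bot’s reply and starts inventing the user’s next message. It is `chat_turn`, not the model, that decides where a “reply” ends.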

To discuss these concepts accurately, we need to change the language we use. Words like “intelligence”, “consciousness”, “sentience”, “sapience”, etc. have always been incredibly vague, approaching completely undefined. They can’t be adequately applied to AI until they’ve been operationalized, such that you could objectively falsify whether or not they apply to a given system.

Very nicely put. If I observe any real person replying in text, what I’m seeing is essentially just them thinking about what word to put next and entering it on the keyboard. It is an extremely complex task. I’m not saying that state-of-the-art language models are mulling over the same thoughts in their “minds” like we are, but they are solving the same problem. And our current paradigm of training these models shows no sign of slowing progress, so I understand the sentiment that calling these models just “text prediction machines” is too simplistic.

Stephen Wolfram’s article on how ChatGPT works was enlightening: https://writings.stephenwolfram.com/2023/02/what-is-chatgpt-doing-and-why-does-it-work/

Like you said, it’s just text prediction, using online content as the training ground.

This isn’t the AI people think it is.

It’s definitely not as good as people think it is. The best description I heard was that AI outputs “hallucinations” as it only needs to look plausible, it doesn’t have to be right.

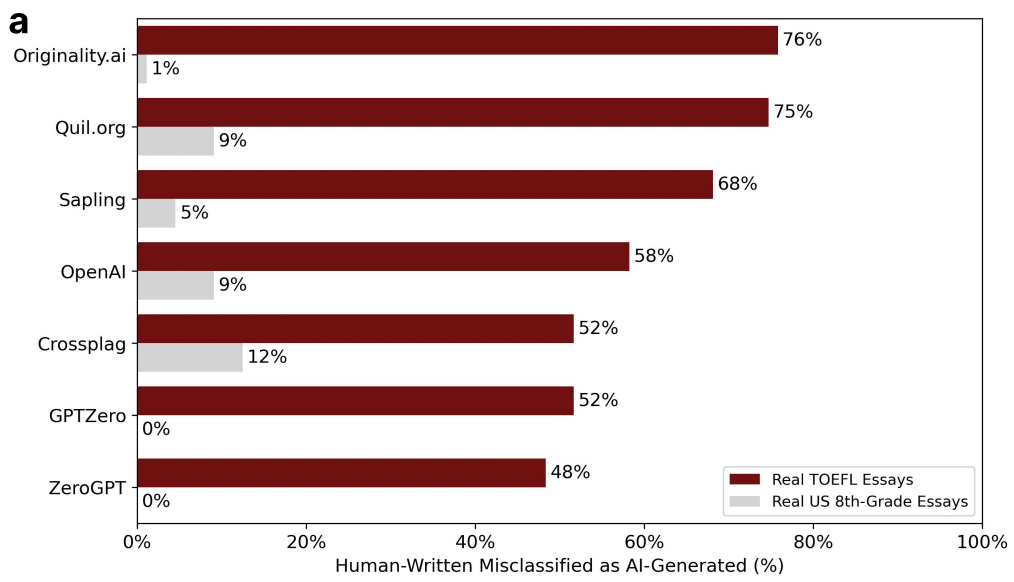

Which is why using it to detect cheating is a concern. You’d hope that it would only be used for a first pass, with a human reviewing the results later, but some people are going to think that AI is infallible and leave it there.